The Predictiveness Study

What can our current predictive metrics tell us about future team performances?

Why (Did I Do This)?

In the fall of 2021, I was listening to an episode of the Double Pivot Podcast that focused on early-season expected goals (xG) numbers in the Premier League when a comment from Mike Goodman – one of the hosts – caught my attention. Speaking about the predictive capability of xG, he said:

“I think that the thing that has always sold xG is that it is better than anything else we have at predicting the future, right? Like, the bottom line for this statistic is that big picture, over a number of games, it is fairly predictive of – it is more predictive of future goalscoring than goals, or shots, or shots on target, or any number of other statistics, and it is fairly stable, meaning it is fairly predictive of itself. And, honestly at just around five games if you look at the math, is when those two predictive qualities begin to assert themselves. And then by the time you get to ten games, they are more than half, right? Like, you can look at it and you can say ‘more than half of these numbers is signal and less than half is noise’ at somewhere around the ten game mark.”

There are two different ideas to touch on here. The first is that xG shows itself to be noticeably better at predicting outcomes than actual goals (which I’ll be abbreviating as aG) around the five game mark. When it comes to comparing the predictive capabilities of xG and aG, though, the theory isn’t quite consistent. There’s a post from American Soccer Analysis (ASA) commenting that you need about seven matches’ worth of data before xG starts outperforming other metrics. At separate times and from separate sources, I’ve seen numbers for this threshold ranging from four matches to eight, which I feel is a fairly wide range.

The second idea is that after ten matches, more than half of what we can read from the xG data is actually predictive. At the time of that episode, the Premier League was five matches into the season. On a later Double Pivot episode, eleven matches into the season (or basically around ten matches), Goodman’s co-host, Michael Caley, restated this in different words:

“The sort of baseline is that around this period in the season, the xG difference is explaining about 50% of the variance in future goals, future points, etc.”

This explanation is more mathematical in nature, and my brain understands the concept more clearly when cast this way than the signal/noise paradigm.

What Caley’s talking about here is the coefficient of determination, or the R2 value. He did a lot of work in the early days of analytics blogging to develop our understanding of xG by studying the relationship between various predictive metrics and the actual outcomes, which you can check out here, here, and here. In the third link, you can actually see in the correlation plots that R2 for xG reaches about 0.5 (or 50%) after ten matches. If you squint a bit, you can also see that xG starts to separate from the other metrics after around five matches, which goes back to the first idea.

At that time, Caley’s studies were around seven or eight years old, so I wanted to do something similar using more recent xG data. That wasn’t the only reason, though: I was hoping to understand these ideas on a deeper level, to really get at the math behind the concepts. I also wanted to see for myself how xG performed against the more entrenched aG, and at what number of matches the former would actually start to distance itself significantly from the latter. So, I did a study of my own that led to a technical paper, which thus far has lived solely on my laptop. This post is an attempt to get a somewhat abridged version of it out into the world.

I used teams’ aG and xG values over varying numbers of matches to predict their future aG and xG values, also over varying numbers of matches. I expected the results to align with the conventional wisdom (at least, conventional within the analytics community) that xG is better at predicting future performance than aG, but I was more interested in seeing exactly how much better xG performs, and how much it can actually tell us. I think understanding this last concept – exactly what we can glean from any individual statistic – is crucial for anyone trying to conduct analysis using these metrics.

How (Did I Set This Up)?

Caley’s study from 2015 looked at how well a varying number of matches predicted team performance over the following 20 matches, even going across seasons. I chose to keep the range of matches confined to a single season, but I varied both the number of matches used for prediction (n_prediction) and the number of matches being predicted (n_outcome).

I’m referring to each set of matches for a single league and season as a “league-season,” and every individual team’s matches within a season as a “team-season.” I generated results for each team-season, within each league-season.

I started by identifying every possible combination of n_prediction and n_outcome, along with every starting point for a given combination. So, at any point in the season, I could use team performance over every possible number of past matches (going back to the season’s start) to predict team performance over every possible number of future matches (going up until the season’s end). I measured team performance through goal difference, calculated on a per-game basis using both aG and xG.
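As a rough illustration of that enumeration (a sketch, not the code used for this study), the windows can be generated from a split point in the season plus the two window lengths. The function names and the list-of-goals input format here are assumptions made for the example.

```python
from typing import Iterator, List, Tuple


def enumerate_windows(season_length: int = 38) -> Iterator[Tuple[int, int, int]]:
    """Yield every (split, n_prediction, n_outcome) for one team-season.

    `split` is the number of matches already played. The prediction window
    is the n_prediction matches ending at the split; the outcome window is
    the n_outcome matches starting at the split.
    """
    for split in range(1, season_length):
        for n_prediction in range(1, split + 1):
            for n_outcome in range(1, season_length - split + 1):
                yield split, n_prediction, n_outcome


def per_game_goal_difference(goals_for: List[float], goals_against: List[float],
                             start: int, stop: int) -> float:
    """Goal difference per game over matches start..stop-1 (0-indexed)."""
    total = sum(goals_for[start:stop]) - sum(goals_against[start:stop])
    return total / (stop - start)


def window_pairs(goals_for: List[float], goals_against: List[float]):
    """Return (n_prediction, n_outcome, past_gd, future_gd) for every window.

    Works the same way for aG or xG: pass whichever per-match values you want.
    """
    rows = []
    for split, n_pred, n_out in enumerate_windows(len(goals_for)):
        past = per_game_goal_difference(goals_for, goals_against, split - n_pred, split)
        future = per_game_goal_difference(goals_for, goals_against, split, split + n_out)
        rows.append((n_pred, n_out, past, future))
    return rows
```

Under this reading, a given pair of window lengths has `season_length - n_prediction - n_outcome + 1` possible starting points per team-season, so short windows produce many data points and full-season windows produce exactly one.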

I then fed these numbers into a linear regression model to assess how well the goal difference over n_prediction forecasted the goal difference over n_outcome. I assessed the quality of the prediction using the coefficient of determination (R2) and the root mean square error (RMSE). Doing this using aG and xG for both the past and future matches led to four separate cases:

Using past aG to predict future aG (aG from aG)

Using past aG to predict future xG (xG from aG)

Using past xG to predict future aG (aG from xG)

Using past xG to predict future xG (xG from xG)
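The regression step and the two error metrics can be sketched in plain Python. This is a minimal illustration under assumed names (`fit_line`, `score_prediction`, `score_all_cases`, and the dictionary layout are all hypothetical), and it scores the fitted line on the same data it was fitted to; it is not the study's actual code.

```python
import math

# The four cases from the text, as (metric used to predict, metric being predicted).
CASES = {
    "aG from aG": ("aG", "aG"),
    "xG from aG": ("aG", "xG"),
    "aG from xG": ("xG", "aG"),
    "xG from xG": ("xG", "xG"),
}


def fit_line(x, y):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def score_prediction(past_gd, future_gd):
    """Return (R2, RMSE) for predicting future goal difference from past."""
    slope, intercept = fit_line(past_gd, future_gd)
    mean_y = sum(future_gd) / len(future_gd)
    # Residual sum of squares: how far the fitted line misses each outcome.
    ss_res = sum((yi - (slope * xi + intercept)) ** 2
                 for xi, yi in zip(past_gd, future_gd))
    # Total sum of squares: spread of the outcomes around their own mean.
    ss_tot = sum((yi - mean_y) ** 2 for yi in future_gd)
    r2 = 1.0 - ss_res / ss_tot
    rmse = math.sqrt(ss_res / len(future_gd))
    return r2, rmse


def score_all_cases(past, future):
    """`past` and `future` map "aG"/"xG" to lists of per-game goal differences."""
    return {name: score_prediction(past[predictor], future[outcome])
            for name, (predictor, outcome) in CASES.items()}
```

Running `score_all_cases` once per combination of n_prediction and n_outcome would give one R2 and one RMSE value per case per combination.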

I had access to match results and xG totals from FBref (the xG numbers were provided by StatsBomb at the time) from 2017-2018 to 2020-2021 for every match in Europe’s top five leagues: the Bundesliga, La Liga, Ligue 1, the Premier League, and Serie A. There were 20 league-seasons in total, all with complete datasets except for the 2019-2020 Ligue 1 season, which the league abandoned due to the COVID-19 pandemic. The 2021-2022 season was still in progress when I did this work, which is why it’s not included in this study.

What (Did I Discover)?

I came up with a visualization that displays the results in a qualitative manner, and I’m using the term “error map” to describe it. It’ll allow us to see some of the overarching trends in the data before getting into the numbers. This graphic provides the basic template.

Error maps show all the values for an error metric (R2 or RMSE) projected onto a two-dimensional grid, with lighter colors corresponding to larger numbers. This means that for R2, lighter colors show stronger correlations; for RMSE, darker colors are better since they indicate smaller errors. Each map has four triangular regions corresponding to the four cases outlined above. The smaller numbers of matches are near the center of the map; n_prediction increases in the outward vertical direction and n_outcome increases in the outward horizontal direction.

So, the outermost diagonal (the hypotenuse of a triangular grid) represents combinations of n_prediction and n_outcome that add up to 38 – the maximum number of matches in a team-season. Moving inward, each subsequent diagonal consists of fewer and fewer matches; by the time we reach the right angle of the triangular grid near the center of the map, we’re using one match to predict the next.
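The geometry of one triangular region can be sketched as a small helper, shown here only to make the layout concrete. The function names are hypothetical, and the 38-match default assumes a 20-team league.

```python
SEASON_LENGTH = 38  # assumed: a 20-team league; the Bundesliga would use 34


def triangle_cells(season_length=SEASON_LENGTH):
    """All (n_prediction, n_outcome) cells that fit inside one season."""
    return [(n_pred, n_out)
            for n_pred in range(1, season_length)
            for n_out in range(1, season_length - n_pred + 1)]


def diagonal(total_matches):
    """Cells whose two windows add up to `total_matches`.

    diagonal(38) is the hypotenuse; diagonal(2) is the single cell at the
    right angle, where one match predicts the next.
    """
    return [(n_pred, total_matches - n_pred) for n_pred in range(1, total_matches)]


def samples_per_team_season(n_prediction, n_outcome, season_length=SEASON_LENGTH):
    """Number of starting points available for one cell in one team-season."""
    return season_length - n_prediction - n_outcome + 1
```

For example, `samples_per_team_season(19, 19)` is 1, while `samples_per_team_season(1, 1)` is 37, which is why cells near the right angle are built from far more data points than cells on the hypotenuse.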

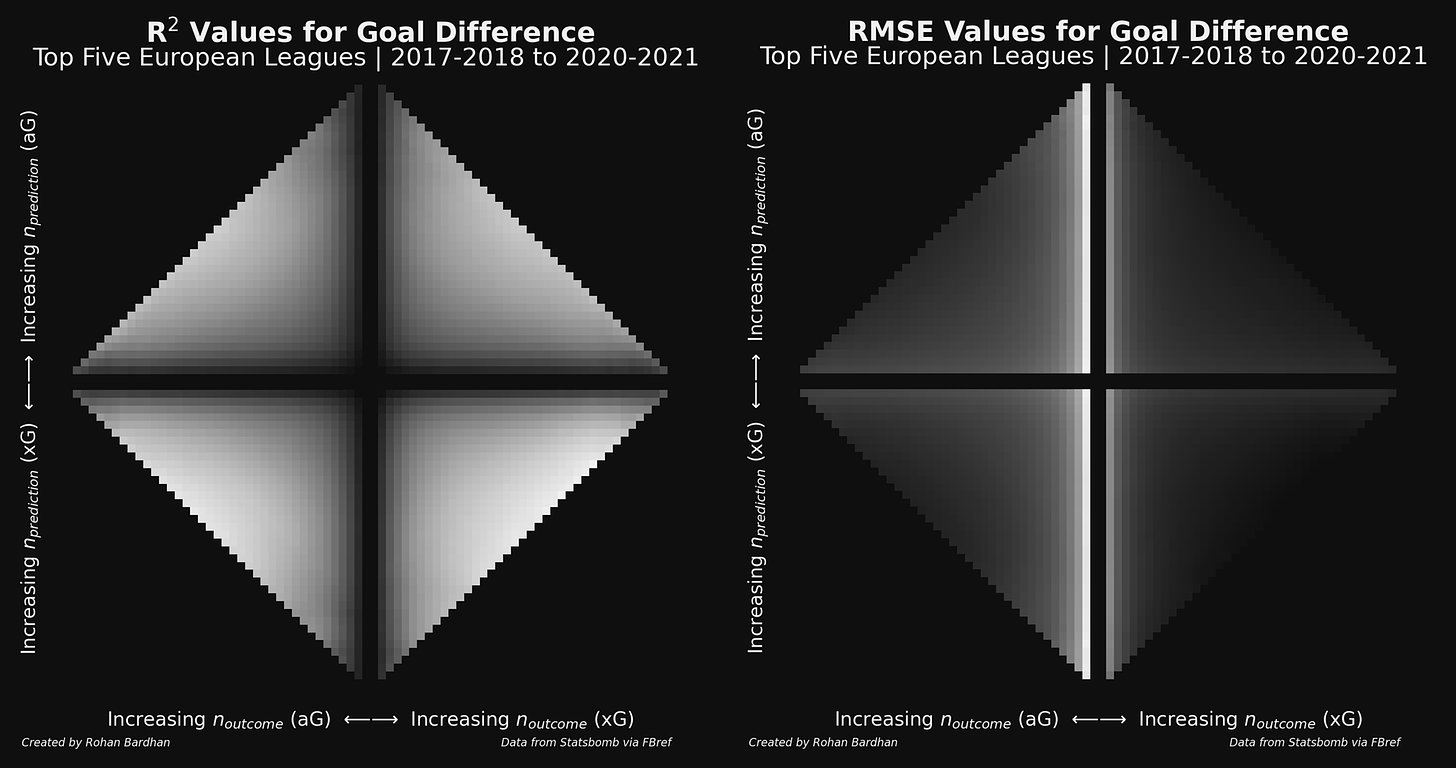

Here are the error maps for all of the top five European leagues, covering every season from 2017-2018 to 2020-2021.

The first thing I want to point out here is that we get the best results around the same area for all four cases: midway along the outermost diagonal. At this point we’re using an entire season’s worth of matches, and n_prediction and n_outcome are about equal. I think it’s fairly intuitive that having more matches to work with is going to lead to better predictions, so it makes sense that the strongest correlations (lighter colors in the R2 map) and lowest errors (darker colors in the RMSE map) occur along the hypotenuses. The best predictions occur in the middle areas because it’s there that we achieve the optimal balance between n_prediction and n_outcome – as we move away from that point, we’re decreasing one despite increasing the other.

The second takeaway from the error maps is a general confirmation of the hypothesis that xG is better than aG at predicting future performances. You can see that the two cases that use xG for predictions (the bottom two) have better correlations and smaller errors than the two cases using aG for predictions (the top two). The best performing case is xG from xG, confirming the idea that xG is the more stable metric. It’ll become more obvious when we get to some quantitative results, but the general trends are still visible here.

I want to take a moment to explain why the RMSE map isn’t symmetric: it shows disproportionately large errors at small values of n_outcome (the light-colored vertical stripes). When n_prediction or n_outcome is small, we have more data points to use, but there’s also more variance in the goal difference values. The variance decreases as we increase the number of matches – meaning that the spread of goal difference values is a lot smaller – but we get fewer possible combinations of n_prediction and n_outcome, and so fewer data points. Now, RMSE indicates by how much the goal difference predicted by the linear regression model differs from the actual goal difference, so it’s measured purely in the units of the outcome values. It picks up the reduction in noise when we increase n_outcome (moving horizontally on the map), but it doesn’t care if we increase n_prediction (moving vertically on the map) because the goal difference over the predictive period doesn’t go directly into the RMSE calculation.
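One way to make the asymmetry concrete: for a least-squares line with an intercept, scored on the data it was fitted to, RMSE equals the standard deviation of the outcome values multiplied by the square root of (1 − R2). So even at a fixed R2, RMSE shrinks whenever the spread of the outcome values shrinks. The sketch below pairs that identity with a toy simulation; the simulation is an assumption for illustration only (every team has the same true strength, and single-match goal differences are drawn from a normal distribution with a made-up standard deviation of 1.7), not a model of the real data.

```python
import math
import random


def population_sd(values):
    """Standard deviation using a 1/n divisor, matching the RMSE definition."""
    mean = sum(values) / len(values)
    return math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))


def rmse_from_r2(sd_outcome, r2):
    """In-sample identity for a least-squares line: RMSE = sd(y) * sqrt(1 - R2)."""
    return sd_outcome * math.sqrt(1.0 - r2)


def simulated_outcome_sd(n_outcome, n_samples=2000, match_sd=1.7, seed=0):
    """Spread of per-game goal difference averaged over n_outcome matches.

    Toy assumption: each match is an independent draw from N(0, match_sd).
    """
    rng = random.Random(seed)
    averages = []
    for _ in range(n_samples):
        matches = [rng.gauss(0.0, match_sd) for _ in range(n_outcome)]
        averages.append(sum(matches) / n_outcome)
    return population_sd(averages)


if __name__ == "__main__":
    for n_outcome in (1, 5, 19):
        sd = simulated_outcome_sd(n_outcome)
        # Hold R2 fixed at 0.5 to isolate the effect of the outcome spread.
        print(n_outcome, round(sd, 3), round(rmse_from_r2(sd, 0.5), 3))
```

In this toy setup the outcome spread, and with it the RMSE at any fixed R2, falls as n_outcome grows, while n_prediction never enters the calculation at all.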

I’m not sure this is anything more than a statistical quirk – at least, it doesn’t impact the primary takeaways from the maps. I do think it’s useful, though, as a warning regarding the instabilities that are present when dealing with small sample sizes.

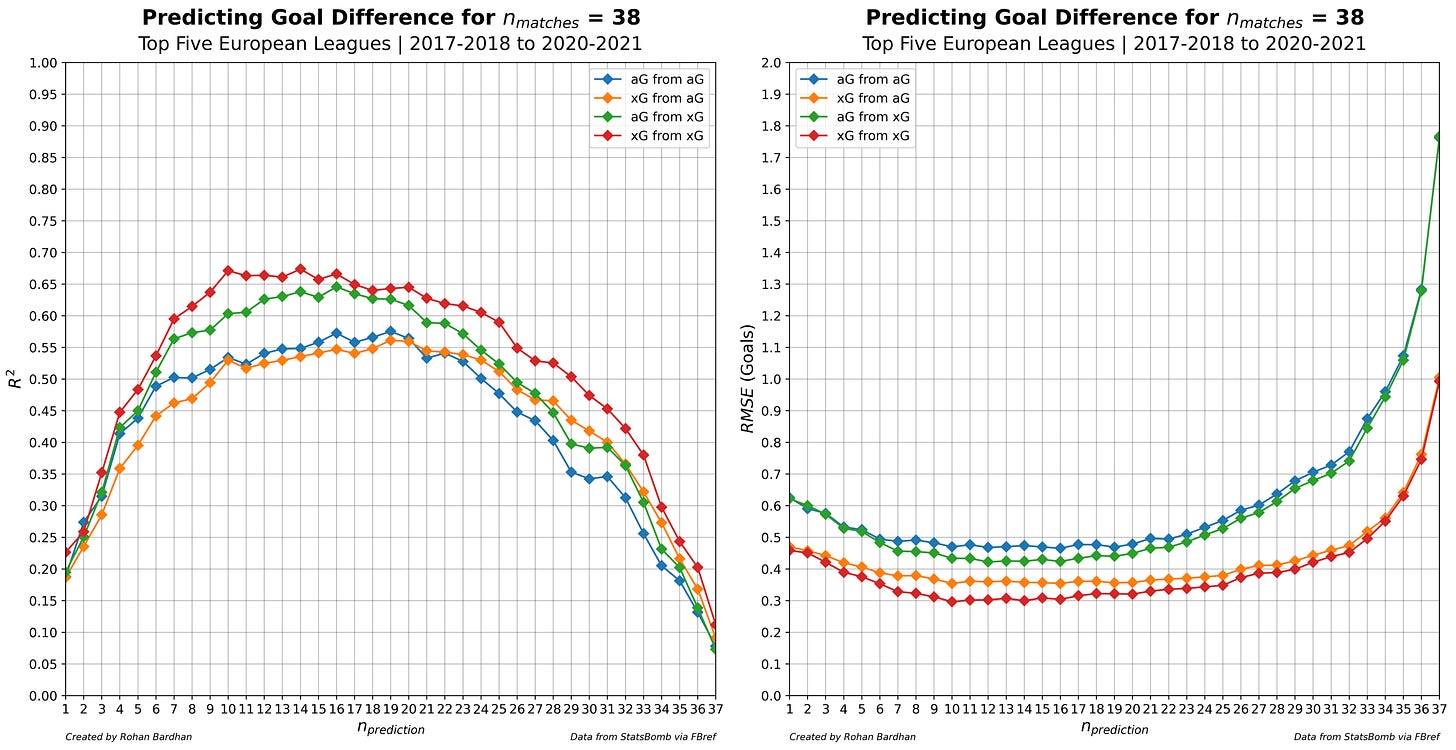

Let’s put some numbers on these ideas. I’m going to walk through some plots, which you can think of as subsets of data that I’ve lifted from the error maps. For example, these first two plots show the information contained in the outer diagonals of the error maps, where we’re using all of the matches in a team-season.

I generated these plots from the dataset for the top five European leagues, but since the Bundesliga teams only play 34 matches in a season, data from their seasons doesn’t make it into these plots. The same is true for the shortened Ligue 1 season. Don’t worry, though – information from these seasons will be included in later plots.

I want to focus on the blue and green lines – representing the two cases where we’re predicting aG (from aG and xG, respectively) – when discussing these plots. These represent the more practical application of the study, since I’m trying to understand how well we can forecast the actual team performances in the future with each metric. The other two cases (the orange and the red lines) are useful for demonstrating the stability of xG as a metric, but I’m not going to get into as much detail on that in this post.

The R2 plot shows that the maximum correlation is noticeably higher when using xG to make predictions than when using aG, indicating once again that xG is a better predictor of outcomes than aG. This distinction becomes clear around the seven game mark (remember, refer to the blue and green lines), which is right in the range of values that I expected. xG reaches a point where it’s predicting 50% of the variance after about six matches, while it takes seven for aG to do so. xG peaks around 65% after 16 matches whereas aG only maxes out around 58%, somewhere in the range of 16 to 19 matches.

At most, the difference between xG and aG is about 10 percentage points of R2, which is…not all that much. It’s not like 65% is particularly great when it comes to forecasting performances, either. I see this as something of a cautionary note regarding xG – it tells us more than aG, but not so much more that we shouldn’t approach it with a healthy skepticism. That being said, the fact that it’s more stable indicates that there’s value in looking at underlying performances rather than just goalscoring rates.

I don’t have too much to say here about the RMSE plot. The errors are smaller when using xG to predict outcomes than aG, so xG once again does better. I mentioned above that RMSE is focusing on outcome values – that’s why the two cases predicting future xG have smaller errors than the two cases predicting future aG. The drastic increases that occur at high values of n_prediction come from the phenomenon I discussed earlier: because these plots use the full season, a high n_prediction leaves only a handful of outcome matches, and RMSE cares about outcome values.

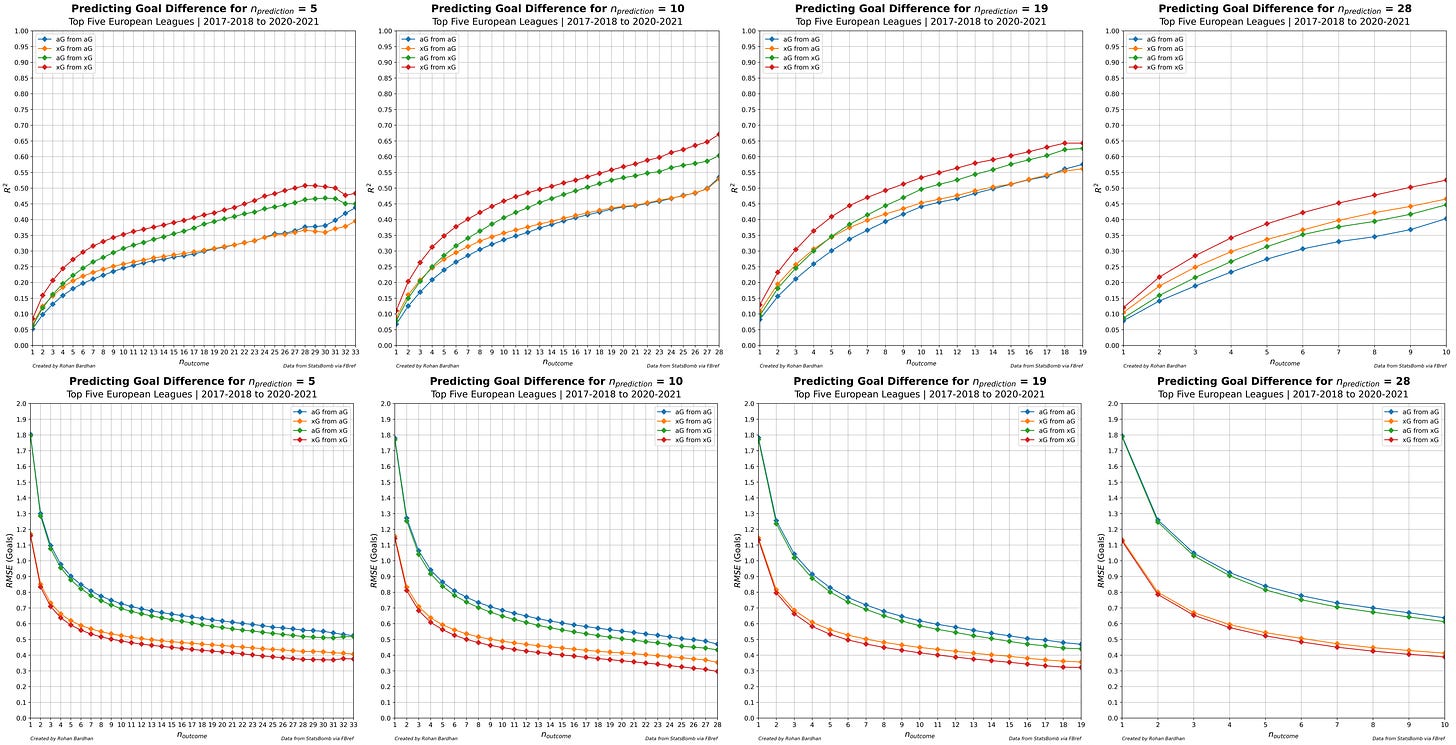

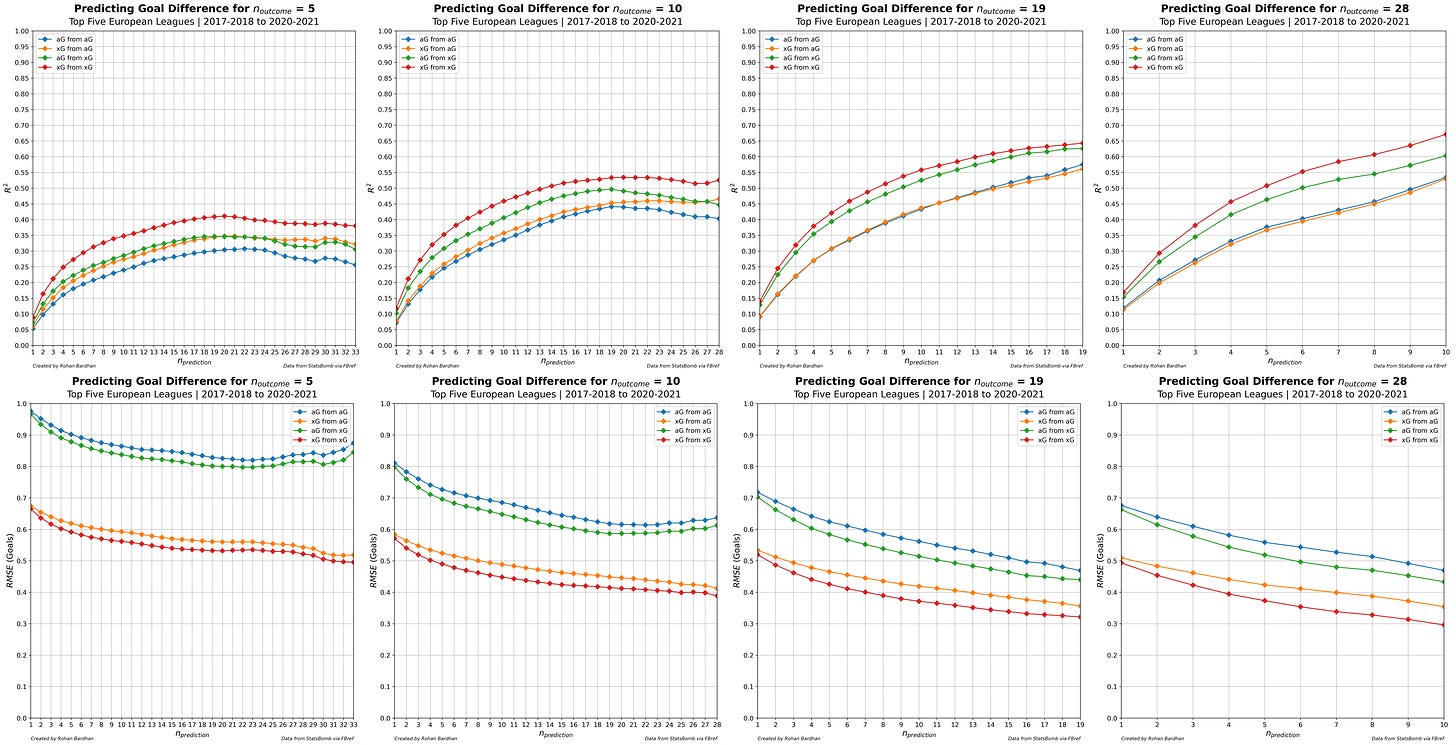

Continuing in the vein of plotting certain elements of the complete results in the error maps, I generated additional plots by fixing n_prediction and n_outcome in turn. Visually speaking, this means plotting each row or column from the map, for each case. This resulted in a large number of plots, and I’ll show them here for 5, 10, 19, and 28 matches. Unlike the previous plots, which are limited to sets of data that cover a full season, these include subsets of matches. As a result, they have many more data points, leading to smoother curves. Also, we’re getting the additional data from the Bundesliga seasons and the Ligue 1 2019-2020 season.

We’ll start with the R2 and RMSE plots for fixed values of n_prediction (i.e., individual rows on the error maps).

Let’s get the obvious conclusion out of the way: xG does better than aG – though again, the improvement xG offers appears to be at most 10% (between blue and green) on the R2 plots. The RMSE plots also show improvement in this regard, but it’s marginal. The larger difference is based on whether we’re predicting aG or xG rather than what we’re using to make those predictions, which goes back to the idea that RMSE is focused on the outcome values.

For whatever sample size of matches we have, plots like these tell us how well that sample will predict any number of future matches. Having five matches’ worth of data will provide less information than 10 – regardless of whether we’re using aG or xG. Nineteen matches will tell us more than 10 matches, but the gain is smaller than the one we get from going from five matches to 10, even though 19 is nearly four times our initial sample of five. We stop seeing significant improvements altogether beyond 19 matches (the halfway point for most seasons), probably due to the reduced number of available outcome matches.

Plots with a fixed n_outcome highlight this phenomenon.

On each individual R2 plot, adding another match to our predictive set provides diminishing returns on the predictive capability that we gain, and we approach some theoretical limiting R2 value (which still appears to be consistently better for xG than for aG). At higher values of n_outcome, though, it becomes harder to see that value because we’re limited by the total number of matches. It’s possible that if we could go up to, say, 50 predictive matches and 50 outcome matches, the limiting R2 value could be as high as, I don’t know, 80%. On the other hand, adding more matches would bring into play a bunch of additional variance outside of xG and aG, as there would be more opportunity for changes in squads and managers to shift the level of a team. That’s part of the reason why I didn’t predict across seasons for this study in the first place.

I’ll also call out how much the ranges of RMSE values shift from plot to plot, a feature not seen in the previous plots where we fixed n_prediction. This again comes down to RMSE’s focus on the outcome matches. Regardless of the overall magnitude of the values, though, it’s clear that adding matches to a set of n_prediction for any defined n_outcome reduces the error by at most 0.2 goals. I don’t have a great feel for what that means in a practical sense, but it doesn’t seem like there’s that much of a reduction in error for each individual match that we add.

Where (Do We Go From Here)?

I think these results provide some valuable insight into the predictive capabilities of aG and xG. Although it’s clear that xG outperforms aG pretty much across the board, the improvements from using xG amount to no more than about 10 percentage points of R2. Considering the volume of data that I used to generate these results, it’s hard to be certain that we’ll see the same results on an individual team level – the real-world use for these numbers in the first place. It’d be useful to see how these results vary when we filter for individual leagues, seasons, and even teams. This would give us some more insight into how confident we should be when trying to apply our large-scale results to smaller datasets.

One of my key takeaways from all of this was that we shouldn’t have too much confidence in any individual predictive metric; we should take everything with a grain of salt and look to add context. That being said, there are still ways we could adjust the study to improve our confidence level; in other words, shift the R2 curves higher. For example, I could remove results spanning the break enforced by the pandemic during the 2019-2020 season, bracketing the two distinct periods of that season as though they were individual seasons themselves. I could also try to factor in managerial changes and see if that improves the results. On the xG side of things, there’s also some potential in comparing different models.

Beyond that, I’m curious about how these results would look for leagues with a lower talent level than the top leagues in the world. MLS would be a good candidate for this, and not just because there’s less quality – the structure of the league enforces a degree of parity that we don’t see in Europe. Maybe I could bring in some data from the Championship as well, to look into this second idea.

I used goal difference as a measure of team performance in this study, but it’d be interesting to look at goals scored or goals conceded (both actual and expected), or even points, to see if they lead to better predictions. It’d also be worthwhile to see how various metrics predict other metrics, mixing and matching all the combinations.

Who (Else Is Thinking About This Stuff)?

Those of you who are plugged in to soccer analytics may have noticed this post from ASA last July. Their Replication Project is more or less the same thing I was attempting here. Now, to be clear, I did all my work before they published it; the similarity between our work is pure coincidence (I even have an email timestamp handy to beat the plagiarism allegations).

I’m not an expert in this stuff, by any means. A large part of this study involved me fumbling in the dark as I tried to figure out how to generate the data I wanted, format the results into the error maps and plots, and actually interpret said results, all while wrestling with Python. That’s why it was gratifying to see a respected pillar of the soccer analytics community publish something like this: I saw it as a validation of my own methods.

One of my main takeaways from this study was the idea of uncertainty – even with the best metrics, there’s still a lot of variance in the predictions we’re making. I tend to focus on acknowledging that there are lots of things we don’t know, and that we aren’t even aware of all of the things we don’t know. This was the perspective through which I made my Premier League and World Cup predictions (shameless plug), and I’ll continue to try to approach football through this lens. We should always be aware that the information we’re working with has limitations and should conduct our analyses accordingly.